Extreme Speech & the Ethiopian Elections

There has been an interesting shift, starting from a utopian discourse of digital technology as a way of bringing democracy and development, into its dark side... Increasingly we are seeing digital technology being framed based on its negative effects.

-Dr. Matti Pohjonen

This week Chipo spoke to Matti Pohjonen about extreme speech and content moderation online. Matti works at the intersection of digital anthropology, philosophy and data science. He has taught at SOAS in Global Media & Post National Communications and is now a postdoctoral researcher at the University of Helsinki, where he is working on global and comparative dimensions of platform accountability. Their conversation raises astute questions about the global future of content moderation. Listen to it here.

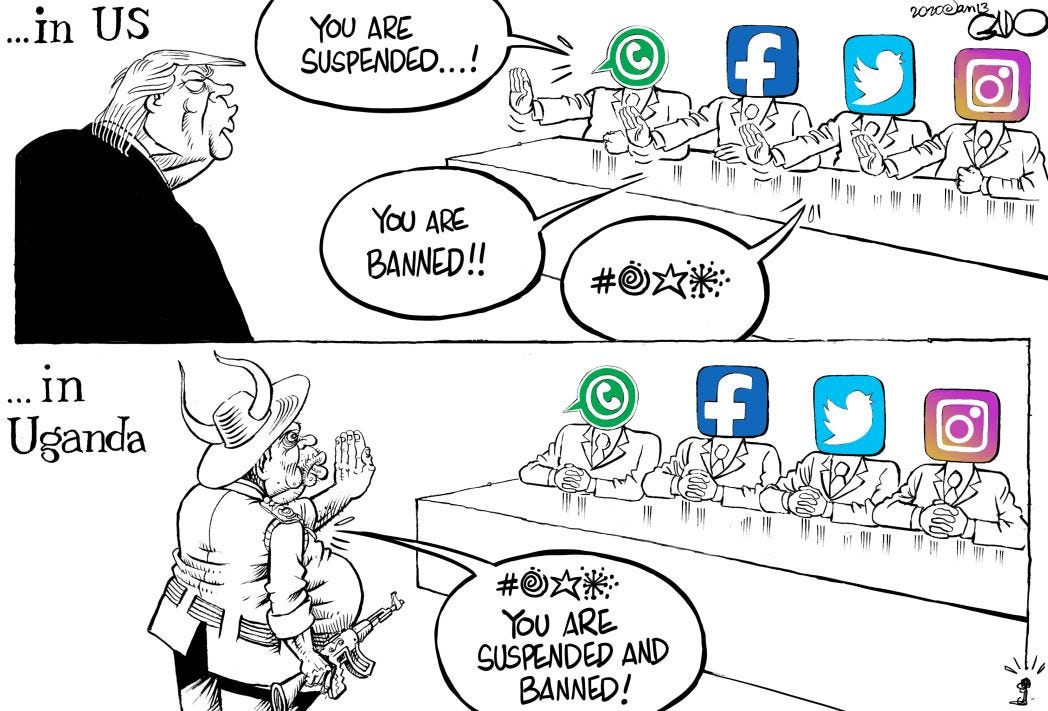

As Adam Satariano points out, Silicon Valley has a problem with double standards. 2021 has seen news sites disproportionately filled with speculation over how Facebook’s oversight board will deal with Donald Trump, whilst uneven and incoherent action is taken against leaders inciting violence in the rest of the world. It is a stark reminder that these platforms - so intimately woven into our lives they become invisible - are multinational corporations serving corporate interest; and in certain contexts public scrutiny has higher market value.

Furthermore, the action taken over Trump has forestalled any future reluctance from platforms to intervene in extreme speech and undermined their claims to be neutral conduits of communication. On this, I’d recommend Tarleton Gillespie’s Custodians of the Internet where he establishes the paradox of content moderation: though platforms understandably disavow it, it is their essential, definitional and constitutional work.

Last year, Ethiopian activists, journalists and civil rights organizations wrote an open letter to Facebook condemning its failure ‘to prevent the escalation of incitement to violence on its services’. Now, in the build-up to what Prime Minister Abiy Ahmed has promised would be the country’s ‘first attempt at free and fair elections’, Facebook has been working to cement a responsible public image. Between March 2020 and March 2021, they claim to have removed 87,000 pieces of hate speech. Last week they removed a network of fake accounts associated with Ethiopia’s Information Network Security Agency, which is meant to be responsible for monitoring telecommunications and the internet. Read more on Facebook’s ‘election integrity work’ in Ethiopia here. Read about some of the most common misinformation circulating online here.

Needless to say, it’s not all on Facebook’s shoulders to keep the elections fair. Four of the country’s ten regions won’t be voting at all this week. One of them is Tigray, where communications blackouts and blocks on humanitarian aid have been reported amid the ongoing conflict. As such, ‘the government helped create conditions where disinformation and misinformation can thrive’. That quote is taken from The Washington Post, which has analysed 500,000 tweets to get a sense of the ongoing information conflict - here. It was also reported in May that social media sites across the country were restricted. If you haven’t already, read Deirbhile’s piece on the increased use of internet shutdowns here.

I’ll leave you with some food for thought in the form of this cartoon by Gado, which Matti mentions in the podcast:

Thank you so much for reading and please get in touch with any thoughts. Enjoy the podcast!

Eliza

If you don’t already get this newsletter in your inbox, please sign up here.

Spotlight

Article 19 works for a world where all people everywhere can freely express themselves and actively engage in public life without fear of discrimination.

Reset is an organization that works to tackle the flaws at the heart of social media, advocating for stronger enforcement of rules and standards, and regulating the surveillance capital business model. Alaphia Zoyab is the Advocacy Director; read her article on Silicon Valley’s Double Standard here.

Mythos Labs uses entertainment, comedy and technology to counter harmful narratives and disinformation online. Listen to the Global Digital Futures podcast episode Digital Strategies for Counter Narratives for further insights on this topic.

Resources from the podcast

Learn more about the role AI will play in content moderation in this journal article, No amount of “AI” in content moderation will solve filtering’s prior-restraint problem.

Find Matti’s research project on global and comparative dimensions of platform accountability at the University of Helsinki here.

Other tech news I’ve been reading this week:

Having blocked Twitter in Nigeria, President Muhammadu Buhari has signed up to Koo. This is Koo’s first expansion out of India, where it brands itself as a government-friendly version of Twitter and has become home to right-wing populists. Read about it at Rest of World here. Read about the Nigerians fighting the Twitter ban in Al Jazeera here.

A horrific report has been published by Human Rights Watch about the prevalence of digital sex crimes in South Korea, said to be the global centre for illegal filming and sharing of explicit images and videos. The FT reports that ‘digital technologies, including high-speed streaming and encrypted chat rooms, have provided new vehicles for propagating deeply embedded gender discrimination’. You can find the report here. Listen to the Global Digital Futures podcast episode Molka & Online Violations of Women in South Korea here.

Connect with us: Twitter | Instagram | Spotify | Apple Podcasts

We want to hear from you. Tell us what you think of this newsletter. Email hello@globaldigitalfutures.com.

You can also read past Global Digital Futures columns.